8,000+ ChatGPT API Keys Exposed Across GitHub & Production Sites

The recent discovery by Cyble Research should serve as a massive wake-up call for network security teams and engineering leaders globally. Security researchers uncovered over 5,000 public GitHub repositories and 3,000 live production websites actively exposing hardcoded ChatGPT API keys. This staggering figure is not merely a collection of isolated developer oversights; it represents a systemic breakdown in modern network and repository security.

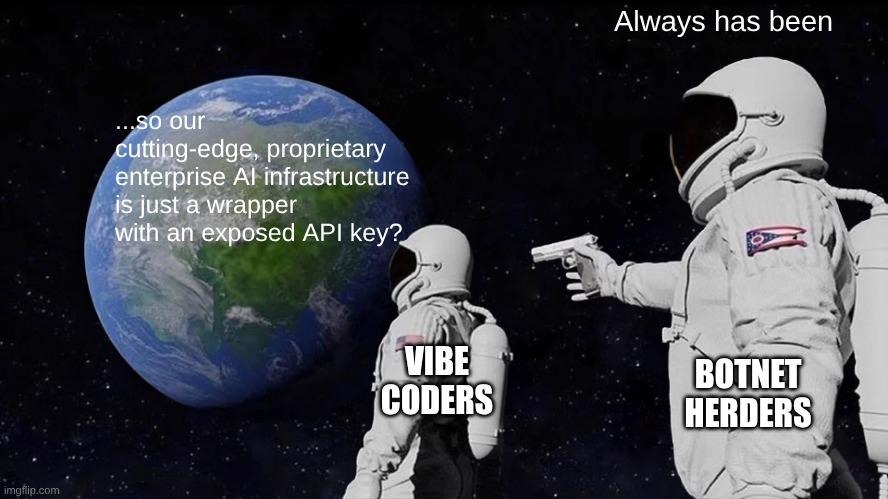

As organizations scramble to integrate generative AI, foundational security hygiene is being left behind. This phenomenon is largely driven by a dangerous new trend known as "vibe coding": a speed-over-security culture in which the rush to ship generative AI features leads teams to bypass standard security protocols entirely. In this modern gold rush, highly sensitive AI credentials are treated as disposable toys for experimentation rather than what they truly are: critical production secrets.

The anatomy of an AI credential leak

A leaked API key’s descent into a life of crime typically begins innocently enough during the rapid prototyping phase of vibe coding. A developer, eager to test a new ChatGPT integration, casually hardcodes an API key into their local testing environment. In the rush to push features live, this temporary shortcut is forgotten, making its way directly into client-side JavaScript or a public GitHub commit. Once that code is pushed, the security perimeter is effectively breached.

The timeline between exposure and exploitation is terrifyingly compressed. Automated malicious scanners and competitor bots constantly scrape public repositories and live websites, looking for exactly these types of text strings. Consequently, exposed credentials are often harvested within hours, if not minutes, of a single insecure commit.

Once these keys are scavenged, the technical barrier to entry for threat actors is dangerously low. Stolen OpenAI tokens are rapidly weaponized to fuel a variety of downstream criminal activities. Here is how attackers typically exploit these exposed keys:

- Massive phishing content generation: Threat actors use the compromised enterprise AI access to draft highly convincing, localized phishing emails at an unprecedented scale.

- Automated scam scripts: Scavenged keys power malicious chatbots designed to defraud users or distribute malware.

- Social engineering lures: High-tier AI access is leveraged to create deepfakes or sophisticated textual lures tailored to compromise specific corporate targets.

The new class of shadow IT risk

The rise of vibe coding has introduced a formidable new challenge for CTOs and CISOs known as "Shadow AI". Rogue, unvetted AI integration experiments driven by individual developers or siloed teams frequently bypass standard network security and procurement vetting. This creates massive, unmonitored blind spots across the enterprise, leaving security teams entirely unaware of where and how AI is being utilized within their own networks.

The financial toll of unchecked AI service abuse

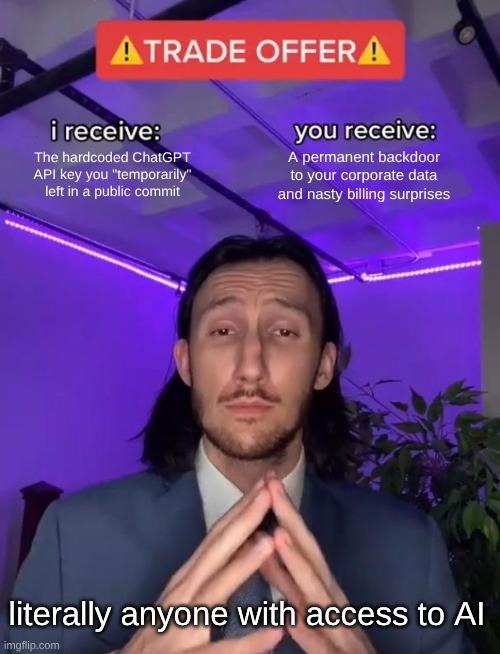

The financial ramifications of this unchecked service abuse at scale are severe. When threat actors acquire stolen, high-tier corporate keys, they do not hesitate to execute high-volume inference workloads under the victim's billing umbrella. This drains victim billing accounts and rapidly exhausts API credits, leading to unpredictable and potentially ruinous cloud bills that can devastate an IT budget overnight.

The persistent threat of client-side exposures

This threat is highly persistent, particularly for the 3,000 live production sites identified in the Cyble report. Client-side exposures are especially dangerous: unlike a repository leak that might be quickly rolled back or patched, a website-based exposure keeps leaking the secret to anyone who inspects the application's source code. Until the key is rotated and removed from the page, it acts as a standing, silent backdoor into the company's AI resources.

Compromised code repositories as network pivot points

When public code repositories are compromised, the broader network security implications become dire. Stolen API keys can serve as a highly privileged pivot point for attackers. By exploiting these keys, bad actors can potentially access internal corporate data fed into the AI, manipulate proprietary datasets, or probe deeper into the organization's wider cloud infrastructure.

Why AI tokens are the new master keys

It is crucial to contrast traditional cloud access keys with modern AI API keys. While traditional keys often have narrowly defined scopes, AI tokens are essentially master keys to powerful inference engines and vast computational billing resources. They hold immense power and must be governed with the exact same rigor, visibility, and strict access controls as core enterprise identity credentials.

Defending code repositories and networks in the AI era

To defend against these modern threats, VPs of Engineering must implement a mandatory technical roadmap to permanently eliminate secrets from client-side code and public-facing assets. This requires a fundamental shift away from hardcoding, prioritizing the implementation of robust secrets management platforms and the use of dynamic environment variables that are specifically tailored for fast-paced AI workflows.
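
As a minimal sketch of that shift, the snippet below reads the key from the process environment on the server side instead of embedding it in source. The variable name `OPENAI_API_KEY` and the helper names `load_api_key` and `redact` are assumptions chosen for illustration; a secrets management platform would typically populate the environment (or replace this lookup entirely) at deploy time.

```python
import os


class MissingSecretError(RuntimeError):
    """Raised when a required secret is absent from the environment."""


def load_api_key(var_name="OPENAI_API_KEY", environ=None):
    """Return the API key from the environment, failing fast if it is unset.

    `var_name` is an assumed name; use whatever your deployment defines.
    `environ` can be any mapping, which makes the function easy to test.
    """
    env = os.environ if environ is None else environ
    key = env.get(var_name, "").strip()
    if not key:
        # Fail at startup rather than falling back to a hardcoded default.
        raise MissingSecretError(f"{var_name} is not set")
    return key


def redact(secret, visible=4):
    """Return a log-safe form of a secret, keeping only the last characters."""
    if len(secret) <= visible:
        return "*" * len(secret)
    return "*" * (len(secret) - visible) + secret[-visible:]
```

Because the key is resolved only on the server, nothing in the shipped JavaScript or the repository contains it, and `redact` keeps it out of logs if the value ever needs to be referenced in diagnostics.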

In its current incarnation, AI (whether agentic or LLM-based) is inherently insecure because it does not distinguish between executable instructions and input data, so the code and credentials surrounding it must carry the security burden. Code repositories must be defended with proactive, secure-at-inception guardrails, and organizations must adopt and strictly enforce mechanisms that stop leaks before they happen. To achieve this, engineering leaders should deploy the following controls:

- Pre-commit linting: Local checks force developers to resolve potential secret exposures before a commit can be created.

- Automated secret-scanning tools: Continuous scanning of repositories ensures that any accidental key exposure is flagged and raises an alert immediately.

- Hard Git hooks: By implementing strict server-side pre-receive hooks, organizations can outright reject any push containing strings that match known credential formats.
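
The controls above share one core step: matching text against known credential formats. The following is a rough sketch of that step, not a replacement for a dedicated secret-scanning tool. The regular expression is an assumption (an `sk-` prefix followed by at least 20 key-like characters) and would need tuning against real key formats; `find_suspected_keys` and `scan_paths` are hypothetical helper names.

```python
import re
from typing import List, Tuple

# Assumed pattern: "sk-" followed by 20 or more key-like characters.
# Real formats vary, so treat this as a starting point only.
KEY_PATTERN = re.compile(r"\bsk-[A-Za-z0-9_-]{20,}")


def find_suspected_keys(text: str) -> List[Tuple[int, str]]:
    """Return (line number, truncated preview) for each suspected key."""
    findings = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for match in KEY_PATTERN.finditer(line):
            # Report only a short prefix so the scanner never re-leaks the key.
            findings.append((lineno, match.group()[:6] + "..."))
    return findings


def scan_paths(paths: List[str]) -> int:
    """Scan files and return 1 if anything suspicious is found, else 0."""
    exit_code = 0
    for path in paths:
        with open(path, encoding="utf-8", errors="ignore") as handle:
            for lineno, preview in find_suspected_keys(handle.read()):
                print(f"{path}:{lineno}: possible API key ({preview})")
                exit_code = 1
    return exit_code
```

A local hook script could call `scan_paths` on the staged files and exit with its return value, since Git aborts a commit when the pre-commit hook exits non-zero. The same matching function could back a server-side check.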

Beyond the codebase, critical access control and network containment policies must be established specifically for AI integrations. Development teams must be instructed to apply the principle of least privilege to all AI keys. Furthermore, they should enforce strict IP allowlisting where possible to restrict API calls to known corporate servers, and configure hard usage quotas to significantly limit the blast radius if a key is ever compromised.
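
To illustrate the containment idea, here is a small sketch of two application-side guards that a backend proxy could apply before forwarding any request to the AI provider: an IP allowlist check and a hard token budget. The names `is_allowed`, `UsageBudget`, and `QuotaExceeded` are hypothetical, and the address ranges are reserved documentation ranges rather than real corporate networks. Guards like these complement, and do not replace, any limits configured with the provider itself.

```python
import ipaddress
from typing import Iterable


class QuotaExceeded(RuntimeError):
    """Raised when a request would push usage past the hard cap."""


def is_allowed(client_ip: str, allowed_networks: Iterable[str]) -> bool:
    """Return True if client_ip falls inside any allowlisted CIDR range."""
    address = ipaddress.ip_address(client_ip)
    return any(address in ipaddress.ip_network(net) for net in allowed_networks)


class UsageBudget:
    """Tracks token usage against a fixed cap to limit the blast radius."""

    def __init__(self, max_tokens: int):
        if max_tokens <= 0:
            raise ValueError("max_tokens must be positive")
        self.max_tokens = max_tokens
        self.used_tokens = 0

    def charge(self, tokens: int) -> int:
        """Record usage and return the remaining budget, or refuse the call."""
        if tokens < 0:
            raise ValueError("tokens must be non-negative")
        if self.used_tokens + tokens > self.max_tokens:
            # Refuse before spending: a stolen key cannot exceed the cap.
            raise QuotaExceeded("hard usage quota reached")
        self.used_tokens += tokens
        return self.max_tokens - self.used_tokens
```

In use, a proxy would first call `is_allowed(request_ip, ["203.0.113.0/24"])` and then `budget.charge(estimated_tokens)`, forwarding the request only if both succeed.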

Fostering a culture of secure innovation with Vicarius

Shifting organizational culture away from reckless "vibe coding" without stifling innovation requires actionable, systemic changes. Foremost among these is the absolute necessity of comprehensive asset discovery and real-time inventory tracking. Organizations cannot secure what they cannot see; therefore, gaining total visibility over the network is required to bring rogue AI experiments and shadow IT back under central, unified governance.

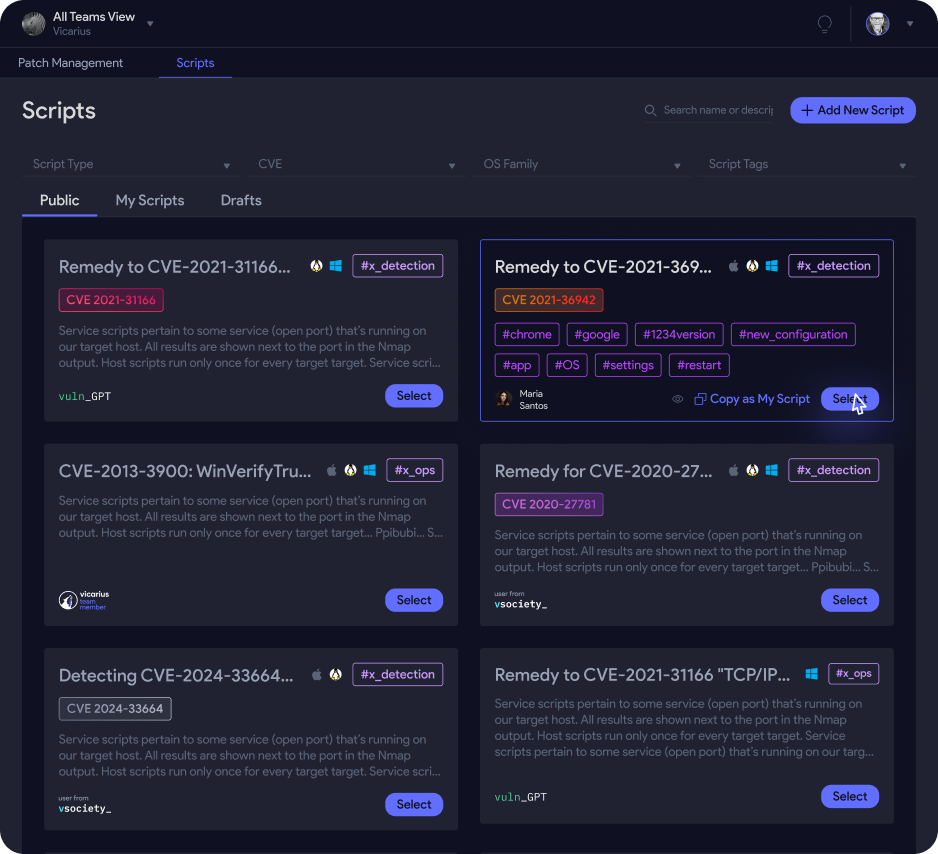

This raises a critical question: How can organizations secure rapid AI deployments when traditional remediation cycles and code rollbacks are inherently too slow? vRx by Vicarius directly addresses this dilemma. It uses AI-driven, contextual risk prioritization to cut through alert noise, allowing teams to focus on active threats rather than theoretical vulnerabilities. Furthermore, it leverages built-in scripting alongside in-memory Patchless Protection to instantly neutralize exposed credentials and vulnerable integrations without requiring a source code change or system reboot. By automating these immediate defenses, vRx helps teams turn security from a development bottleneck into a distinct competitive edge.

From vulnerable experimentation to resilient execution

The Cyble findings show that the current AI gold rush has created a massive, easily exploitable credential problem. The era of vibe coding has prioritized speed over safety, and the cost of that trade-off is now clear. Executives must recognize that protecting code repositories and treating AI credentials as highly sensitive production secrets is no longer optional; it is the baseline for safe, sustainable innovation. Don’t wait for an exposed token to drain your resources or compromise your network. Take immediate action by booking a personal demo of vRx to see firsthand how you can automatically discover, prioritize, and remediate exposed API keys, vulnerable assets, and shadow AI risks before they fuel the next massive breach.