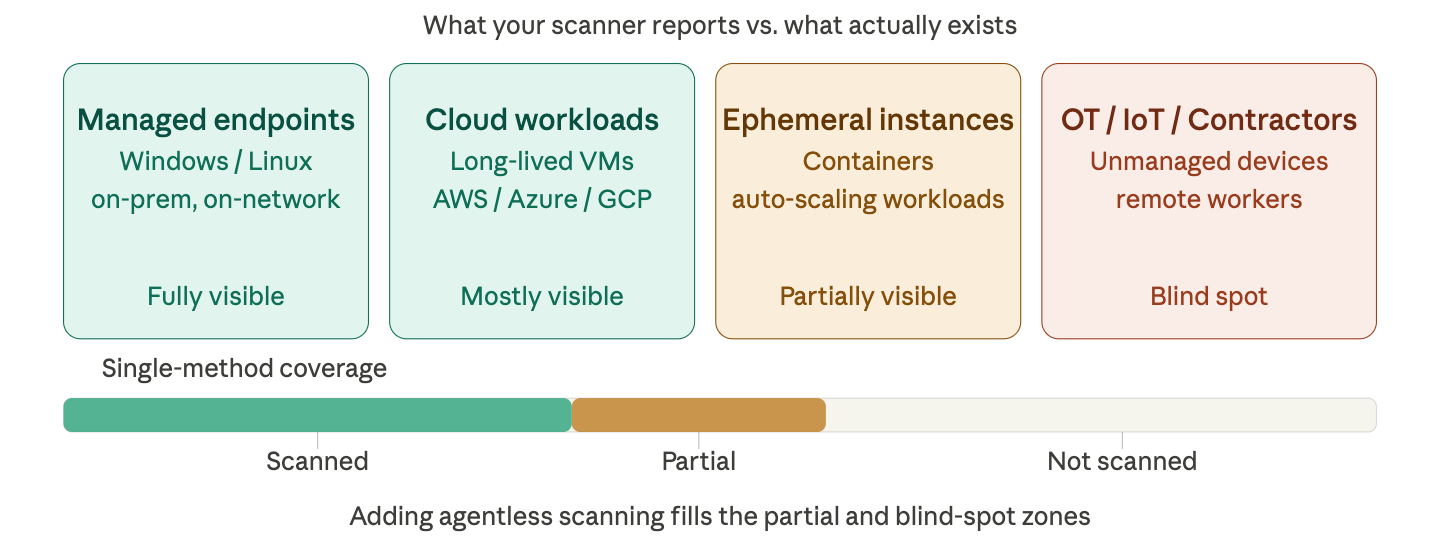

Your vulnerability program is only as good as what it can see. And if you are running a single scanning method across a mixed enterprise environment, there are entire categories of assets it cannot see at all.

This is not a vendor pitch. It is a structural problem that follows from how each scanning approach works. Agent-based scanning provides deep, continuous visibility into every managed system where an agent is deployed. Agentless scanning provides broad, fast coverage across everything reachable on the network or via cloud APIs. Neither is a superset of the other. The assets each method misses are different and often the most dangerous ones.

CISOs evaluating vulnerability management platforms need to ask one question before anything else: does this tool see my entire attack surface, or just the part I already manage well?

How each method works

Agent-based scanning works by running software directly on each host, giving it privileged access to configuration files, running processes, and file system data. Agentless tools instead use cloud provider APIs, disk snapshots, and network protocols to assess systems from the outside; they never log into the machine or run code inside it. (Source: Wiz)

The practical consequence: agentless tools answer "what could be attacked" more than "what is being attacked right now," while agent-based tools shine at runtime threat detection and behavioral analysis, watching what is actually happening on the host. (Source: Wiz)

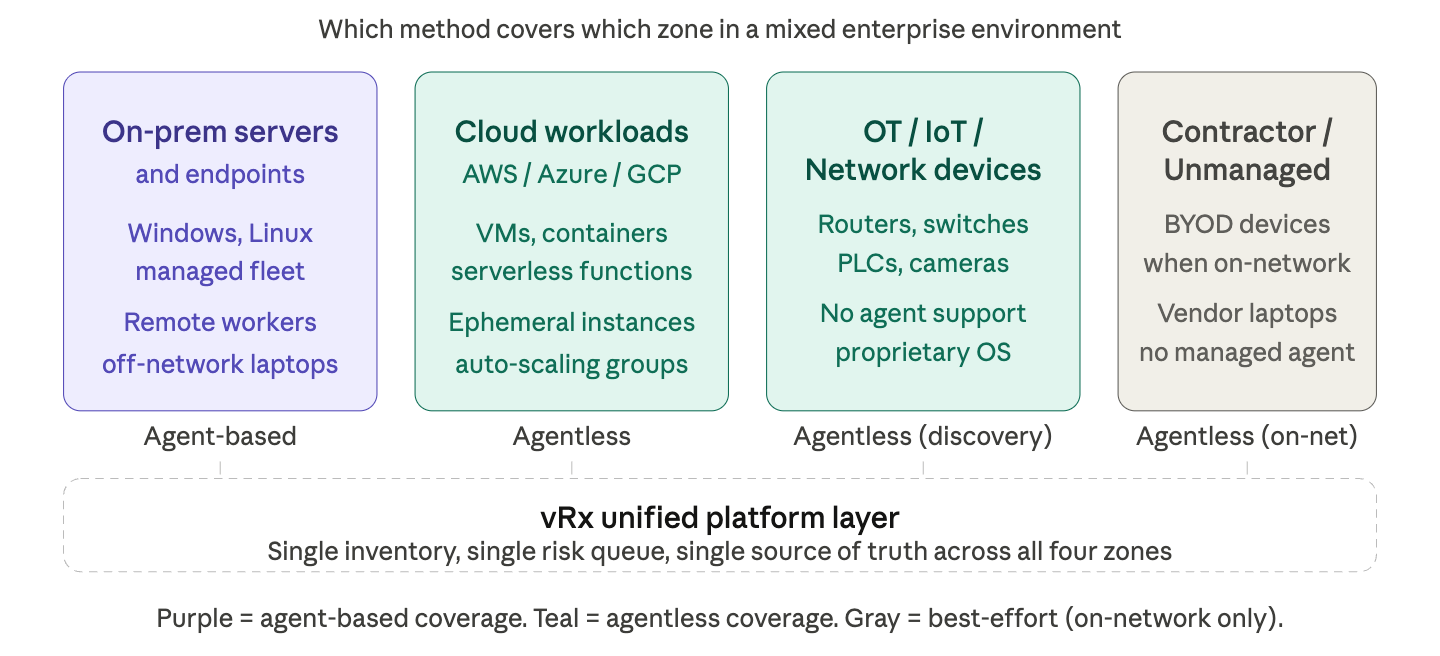

The corrected coverage picture

The original framing of this debate is usually too clean. Here is what the research actually shows across each asset class with the real nuances included:

Two corrections to the usual comparison table are worth calling out explicitly.

First, remote and off-network endpoints, such as laptops behind home networks, are entirely invisible to agentless scanning. Agentless scanning requires a consistent network connection, which makes it unsuitable for remote workers whose devices the scanner cannot reach. This is a significant nuance in a post-2020 workforce.

Second, OT and IoT devices. Agentless scanning can identify IoT devices and network devices such as routers and switches that do not run an OS that supports agents, but the vulnerability depth on those devices is limited. They are not fully "discoverable": they are network-visible, but the vulnerability data returned is shallower than on standard endpoints. The exception is an authenticated scan, whose output is nearly the same as an agent's, although its freshness still depends on the scan interval.

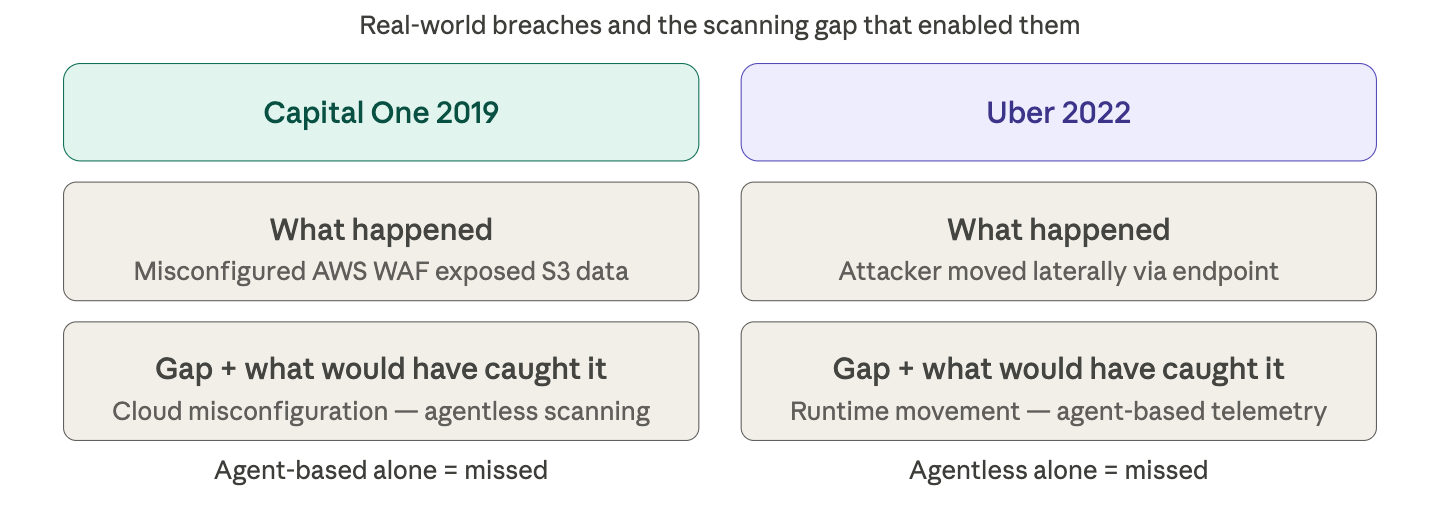

The blind spots that get exploited

Neither gap is theoretical. In Capital One's 2019 breach, a misconfigured web application firewall in the company's AWS environment exposed S3 data; agentless configuration scanning could have flagged that misconfiguration before the breach. In Uber's 2022 breach, runtime telemetry from an agent-based sensor could have caught the lateral movement. The pattern is consistent: different attack types exploit whichever gap the organization left open.

This matters operationally because compliance and audit needs can push organizations toward one approach or the other, creating a false sense of coverage. SOC 2 and ISO 27001 requirements can be satisfied on paper by agentless cloud-level scanning, but that does not mean runtime behavioral threats on managed endpoints are covered. The audit passes; the blind spot remains.

What "combined" actually requires

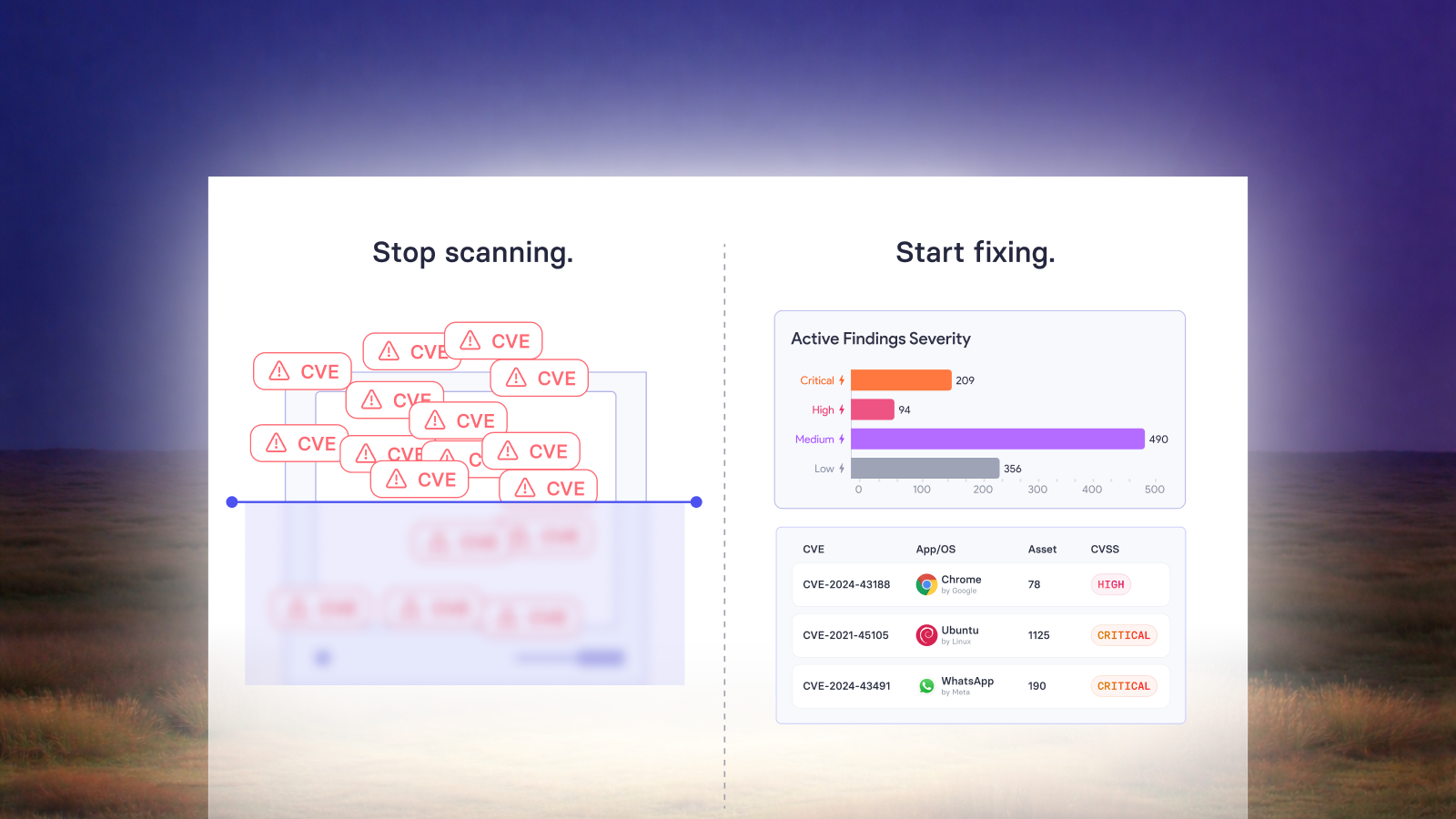

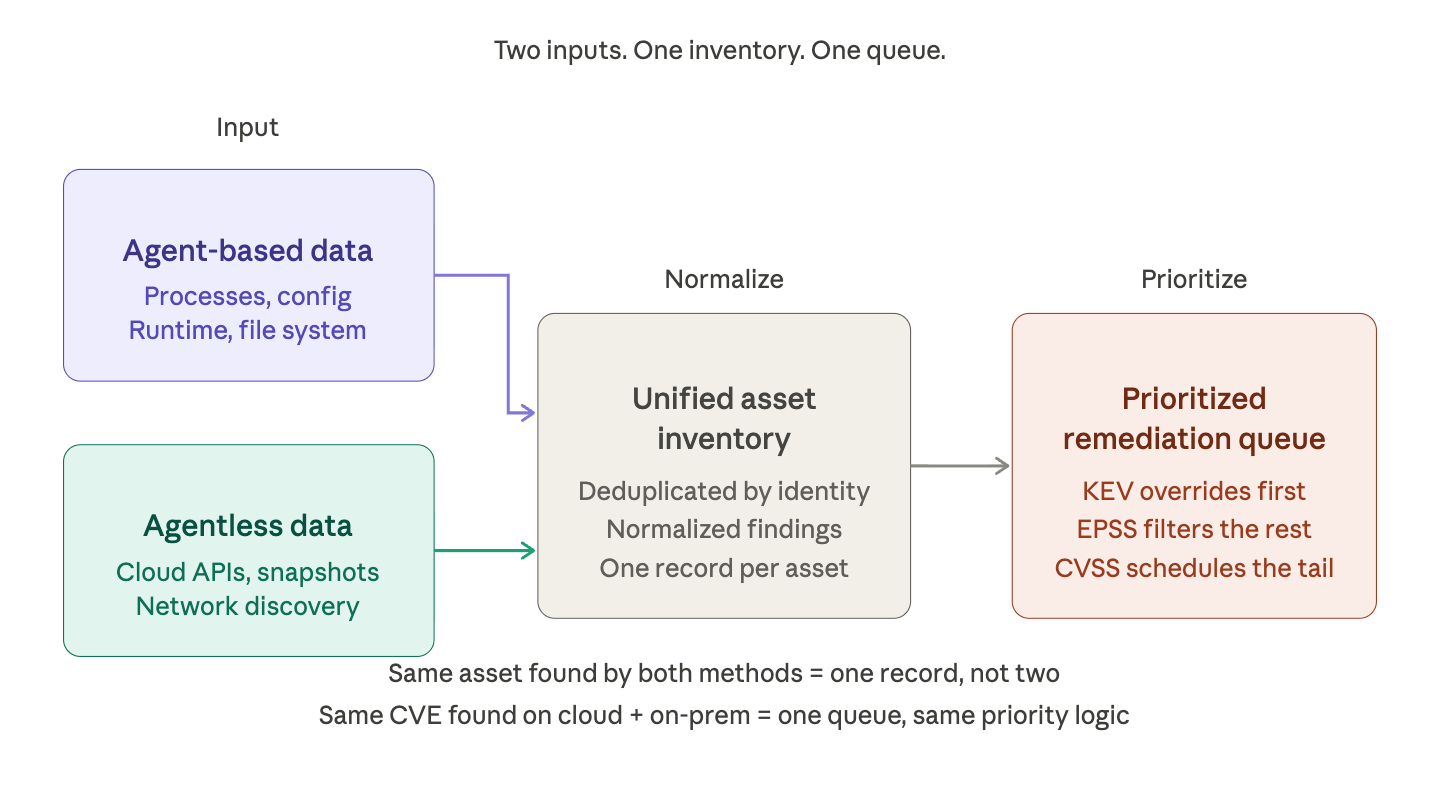

Running two separate scanning tools and merging CSV exports is not a combined approach. It is a data reconciliation problem disguised as a security program.

A genuine multi-method architecture requires three things that most point solutions cannot provide:

First, unified asset identity. Combining both scanning methods ensures broad coverage and rich data, especially in hybrid and remote environments, but only when the platform can match an agentless-discovered device to its agent-managed counterpart without creating duplicate records. IP addresses change. Hostnames drift. If your platform treats them as separate assets, your vulnerability count is inflated and your remediation metrics are meaningless.

Second, a single risk view. Findings from both methods need to be normalized and prioritized together. A CVSS 9.8 found agentlessly on a cloud workload and a CVSS 9.8 found by an agent on a managed server should appear in the same remediation queue, with the same prioritization logic applied.

Third, coverage reporting that is honest about gaps. The most dangerous artifact of running only one scanning method is false confidence. A platform that shows "12,000 vulnerabilities under management" without disclosing that it only covers 60% of your asset inventory is not a risk management tool. It is a liability.
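To make the three requirements concrete, here is a minimal sketch in Python. It is an illustration under stated assumptions, not how any particular platform implements this: the `Observation` schema, the identifier priority in `identity_key`, the placeholder finding IDs, and the `inventory_size` figure are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Observation:
    """One sighting of an asset by one scanning method (hypothetical schema)."""
    source: str                     # "agent" or "agentless"
    stable_id: Optional[str]        # e.g. cloud instance ID or hardware serial
    mac: Optional[str]              # fallback identifier
    hostname: str
    ip: str                         # recorded, but never used for identity
    findings: list = field(default_factory=list)   # (finding ID, CVSS score)


def identity_key(obs):
    """Prefer identifiers that survive IP changes and hostname drift."""
    if obs.stable_id:
        return ("id", obs.stable_id)
    if obs.mac:
        return ("mac", obs.mac.lower())
    return ("host", obs.hostname.lower())   # weakest fallback


def merge(observations):
    """Requirement 1: one record per real asset, whatever method saw it."""
    assets = {}
    for obs in observations:
        asset = assets.setdefault(
            identity_key(obs), {"sources": set(), "findings": {}}
        )
        asset["sources"].add(obs.source)
        for finding_id, cvss in obs.findings:
            # A finding reported by both methods counts once.
            previous = asset["findings"].get(finding_id, 0.0)
            asset["findings"][finding_id] = max(cvss, previous)
    return assets


def remediation_queue(assets):
    """Requirement 2: a single queue ordered by one scoring rule."""
    rows = [
        (cvss, finding_id, key)
        for key, asset in assets.items()
        for finding_id, cvss in asset["findings"].items()
    ]
    return sorted(rows, reverse=True)


def coverage(assets, inventory_size):
    """Requirement 3: scanned assets as a fraction of the known inventory."""
    return len(assets) / inventory_size


# Placeholder data: finding IDs are not real CVEs.
observations = [
    Observation("agentless", "i-0abc", None, "web-01", "10.0.0.5",
                [("CVE-EXAMPLE-1", 9.8)]),
    Observation("agent", "i-0abc", "AA:BB:CC:00:11:22", "web-01.corp",
                "10.0.3.17", [("CVE-EXAMPLE-1", 9.8), ("CVE-EXAMPLE-2", 5.3)]),
    Observation("agentless", None, "DE:AD:BE:EF:00:01", "printer-7",
                "10.0.9.9", [("CVE-EXAMPLE-3", 7.5)]),
]

assets = merge(observations)
for row in remediation_queue(assets):
    print(row)
print(f"coverage: {coverage(assets, inventory_size=5):.0%}")
```

The two sightings of `web-01` collapse into one asset even though the IP and hostname differ, and the shared finding is counted once. A real matcher would need to cross-reference several identifiers per asset rather than pick the first one available; this sketch deliberately keeps that part simple.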

The operational reality for mixed enterprise environments

For environments spanning on-prem infrastructure, cloud workloads, OT networks, and contractor devices (which describes most enterprises today), the combined approach is not optional. It is the minimum viable architecture for defensible risk management.

Agent-based systems are a prudent choice for mission-critical on-premises systems where security and reliability are paramount, while agentless security is more flexible and scalable for networks hosting disparate devices. The practical recommendation from practitioners is consistent: use agentless scanning to get organization-wide coverage, asset inventory, and configuration and vulnerability checks across all cloud accounts, then deploy agents on a targeted set of critical workloads that require deep runtime inspection.
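That recommendation can be expressed as a simple assignment policy. The sketch below is one possible reading of it, not a prescribed rule set: the `Asset` fields and the `scan_methods` function are hypothetical names introduced for illustration.

```python
from dataclasses import dataclass


@dataclass
class Asset:
    name: str
    network_reachable: bool   # a scanner or cloud API can reach it
    supports_agent: bool      # its OS can run an agent (often False for OT/IoT)
    critical: bool            # needs deep runtime inspection


def scan_methods(asset):
    """Return the scanning methods to apply; an empty set is a blind spot."""
    methods = set()
    if asset.network_reachable:
        # Agentless scanning is the broad baseline wherever it can reach.
        methods.add("agentless")
    if asset.supports_agent and (asset.critical or not asset.network_reachable):
        # Agents add depth on critical hosts and are the only option
        # for off-network devices such as remote laptops.
        methods.add("agent")
    return methods


fleet = [
    Asset("db-prod-01", network_reachable=True, supports_agent=True, critical=True),
    Asset("remote-laptop", network_reachable=False, supports_agent=True, critical=False),
    Asset("ip-camera", network_reachable=True, supports_agent=False, critical=False),
    Asset("isolated-plc", network_reachable=False, supports_agent=False, critical=True),
]

for asset in fleet:
    methods = scan_methods(asset)
    print(asset.name, sorted(methods) if methods else "NO COVERAGE")
```

The useful output is the empty set: an asset that neither method can cover should be reported as a gap rather than silently dropped from the results.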

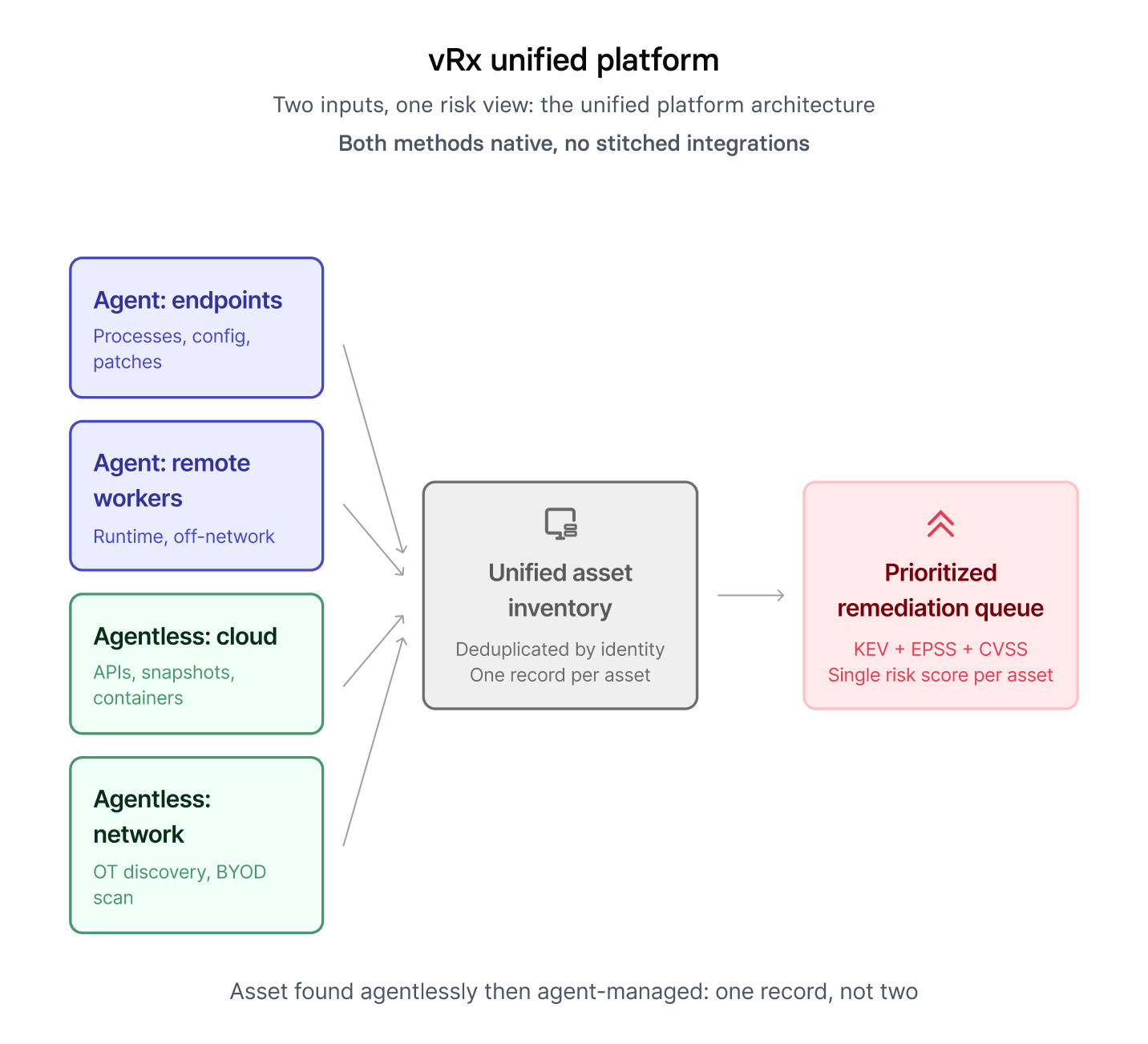

That is the architecture. The question is whether your platform supports it natively or forces you to build it from stitched-together integrations.

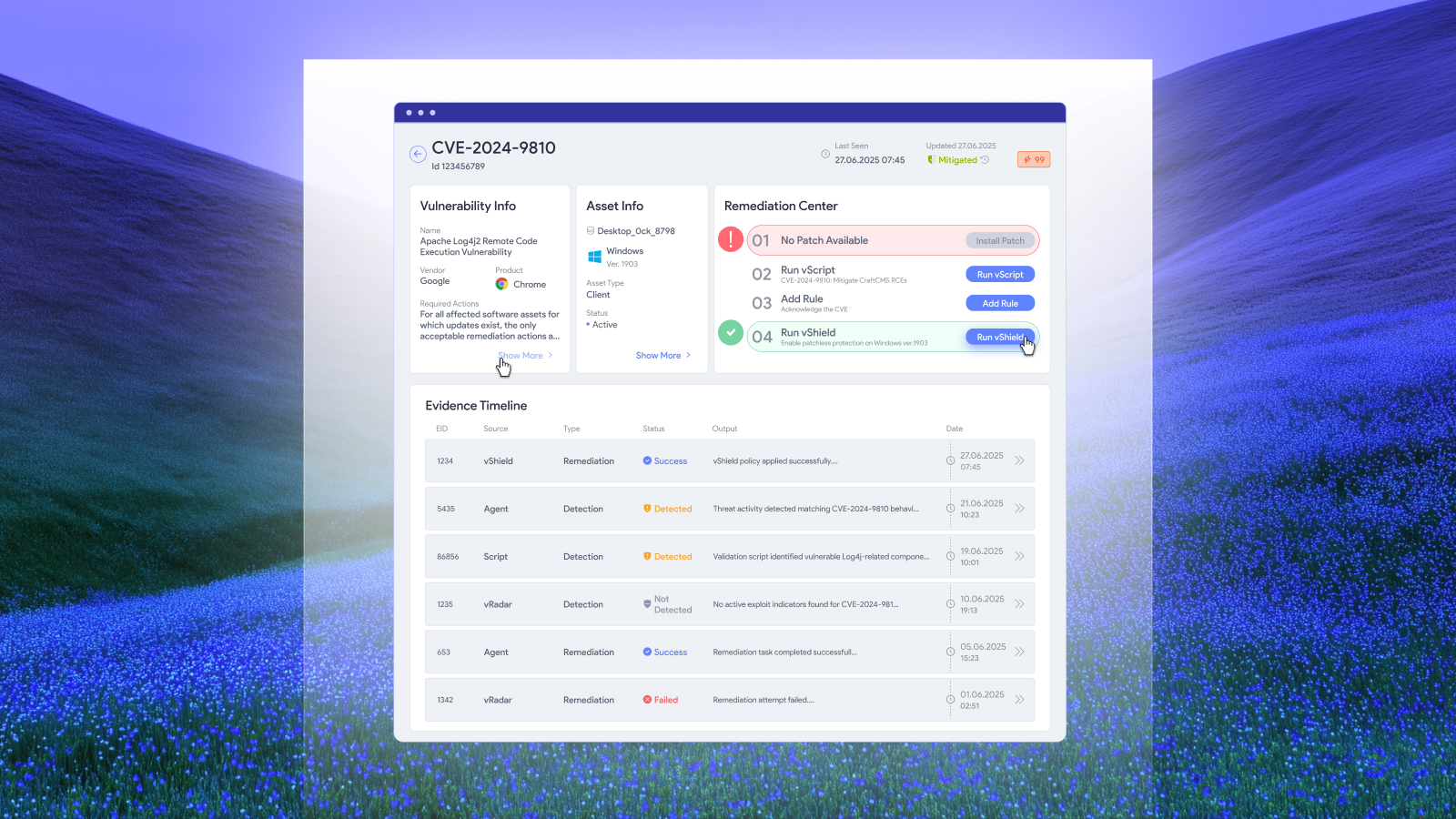

Why vRx was built to do both

vRx by Vicarius was designed around this exact architecture: agent-based and agentless scanning as native capabilities within a single platform, feeding a unified asset inventory. When a device is discovered agentlessly and later brought under agent management, it does not create a duplicate record. Vulnerability findings from both sources are normalized and prioritized in one queue, using the same risk scoring logic.

For a CISO managing a mixed environment, buying two specialized tools and reconciling their outputs creates exactly the data integrity problems described above: the inventory drifts, deduplication breaks down at scale, and the board report looks clean while actual coverage has holes.

The platform question is not "agent-based or agentless." It is "which platform gives me both, natively, without making my team manage two data models."

The evaluation question to bring to any demo

Before committing to any vulnerability management platform, ask the vendor to show you a coverage report that includes assets their tool did not find, not just the ones it did. Any platform that cannot distinguish between "asset not vulnerable" and "asset not scanned" is not providing a risk picture. It is providing a snapshot of the assets it already knew about.
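The distinction between "not vulnerable" and "not scanned" is easy to state in code. The following is a minimal sketch, assuming an inventory that comes from a source independent of the scanner (a CMDB export, for example) and a `scan_results` mapping in which a missing key means the asset was never scanned. All names are hypothetical.

```python
from enum import Enum


class Status(Enum):
    NOT_SCANNED = "not scanned"
    CLEAN = "scanned, no findings"
    VULNERABLE = "scanned, findings present"


def status(asset_id, scan_results):
    """Classify one asset; absence from scan_results is not the same as clean."""
    if asset_id not in scan_results:
        return Status.NOT_SCANNED
    return Status.VULNERABLE if scan_results[asset_id] else Status.CLEAN


def coverage_report(inventory, scan_results):
    """Group every inventoried asset by status, including the unscanned ones."""
    report = {s: [] for s in Status}
    for asset_id in inventory:
        report[status(asset_id, scan_results)].append(asset_id)
    return report


# Placeholder data: the inventory is independent of what the scanner found.
inventory = ["web-01", "db-01", "laptop-17", "plc-03"]
scan_results = {
    "web-01": ["CVE-EXAMPLE-1"],   # scanned, one finding (not a real CVE)
    "db-01": [],                   # scanned, nothing found
}

for state, asset_ids in coverage_report(inventory, scan_results).items():
    print(f"{state.value}: {asset_ids}")
```

A report built this way lists `laptop-17` and `plc-03` as unscanned instead of letting them disappear, which is the behavior to ask a vendor to demonstrate.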

If you want to see what your actual attack surface looks like across agent-managed endpoints, cloud workloads, and unmanaged devices in a single view, book a demo with the vRx team at vicarius.io. We will run a coverage assessment against your current environment and show you what your existing tool is missing.

Also read:

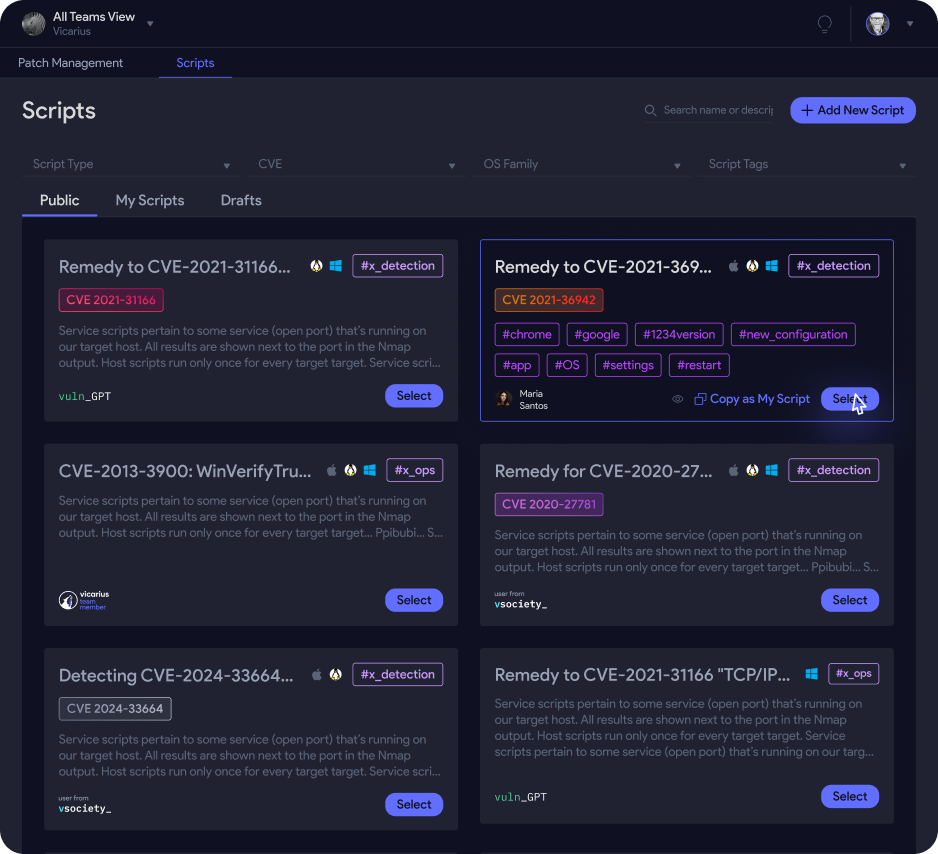

6 tasks Vicarius automates better than your current vulnerability platform

When Patching isn't enough: how vRx's Scripting Engine closes the Remediation gap